Nothing Looked Wrong... at First

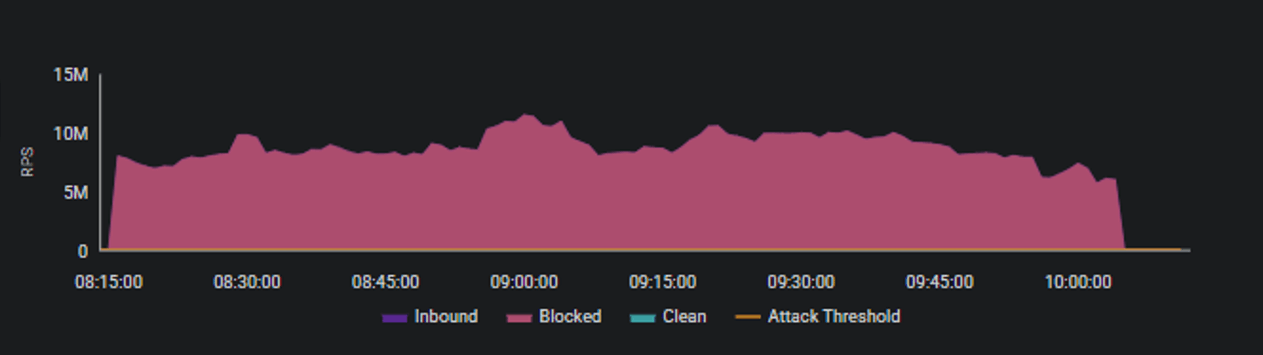

At 07:42 on a weekday morning, nothing looked wrong. Requests flowed normally. Services responded as expected. Monitoring dashboards showed green. Thirty minutes later, a public financial service supporting several million beneficiaries across EMEA was under extreme pressure from nearly 10 million encrypted requests every second. There was no breach, no exploit, and no stolen credentials. Just traffic that looked entirely legitimate.

Volumes at this level are almost never sustained. In most cases, they spike briefly and collapse, which is what the security teams expected. This one did not. The flow held steady at an intensity few organizations ever experience, and it continued that way for more than an hour. That endurance, more than the peak itself, became the most stressful part of the attack.

Figure 1: graph of incoming HTTPS request volume measured in requests per second over time.

This was not a one-off event. Over the past few months, several large-scale Web DDoS attacks have followed the same pattern: a sustained thirty-minute HTTPS flood reaching roughly one million requests per second against a major European public financial institution, and an hour-long attack peaking near five million requests per second. In every case, services remained available not because the attacks lacked scale, but because mitigation was precise and selective.
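The difference between a brief spike and a sustained flood can be made concrete with a small sketch. The function below is illustrative only: the name `longest_sustained_run`, the sampling interval, and the threshold are assumptions chosen for the example, not part of any tooling used in the incidents described here. Given a series of request-rate samples, it reports how long traffic stayed at or above a threshold without dropping, along with the peak seen during that stretch.

```python
from typing import Iterable, Tuple


def longest_sustained_run(
    rps_samples: Iterable[float], threshold: float
) -> Tuple[int, float]:
    """Return (length, peak) of the longest consecutive run of samples
    at or above `threshold`. Length is counted in samples, so the real
    duration depends on the (assumed) sampling interval."""
    best_len, best_peak = 0, 0.0
    run_len, run_peak = 0, 0.0
    for rps in rps_samples:
        if rps >= threshold:
            # Still inside a high-traffic stretch: extend the current run.
            run_len += 1
            run_peak = max(run_peak, rps)
            if run_len > best_len:
                best_len, best_peak = run_len, run_peak
        else:
            # Traffic dropped below the threshold: the run is over.
            run_len, run_peak = 0, 0.0
    return best_len, best_peak


# Hypothetical per-minute samples, in millions of requests per second.
brief_spike = [1, 1, 9, 1, 1]
sustained_flood = [1, 6, 6, 7, 6, 1]

print(longest_sustained_run(brief_spike, threshold=5))
print(longest_sustained_run(sustained_flood, threshold=5))
```

In this made-up data the spike has the higher peak, but the flood stays above the threshold four times as long. Tracking both numbers, rather than the peak alone, reflects why endurance was the harder part of these attacks.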

Autonomy Powered by AI Changed the Rules

These incidents point to something larger than individual attack campaigns. They reflect a structural shift in how denial-of-service is executed and why it is becoming harder to manage. Web DDoS is no longer primarily about overwhelming infrastructure. It is about exploiting trust, scale, and speed.

What makes these attacks different is autonomy. Attackers increasingly rely on automated decision-making systems that observe how applications respond and adjust behavior continuously. This is where agentic AI connects directly to Web DDoS. Not as a futuristic concept, but as a practical way to sustain pressure without constant human control.

The Blind Spot We Built For Them

Encryption amplifies the challenge. Nearly all modern application traffic is encrypted, which protects users but limits visibility. According to a Cybersecurity Insiders industry report, fifty-five percent of security teams say encrypted traffic is now their biggest detection blind spot. This is not a tooling failure; it is the outcome of modern architecture meeting adversaries who understand how to hide inside what looks normal.

The Dangerous Duo

Together, autonomy and encryption explain why Web DDoS is surging at the application layer. These attacks aim not only to crash systems instantly but also to persist long enough to exhaust the assumptions built into traditional defenses.

For executive leaders, the message is clear. Resilience can no longer be measured only by uptime. It must account for how services behave under sustained, legitimate-looking pressure. The most dangerous attacks ahead will not announce themselves. They will arrive encrypted, adaptive, and indistinguishable from success until defenders fall behind.