In 2023, a transformative event reshaped the technology landscape: artificial intelligence (AI) moved into the mainstream. The turning point was not AI as a broad domain but the rise of Large Language Models (LLMs) and, specifically, Generative Pre-trained Transformers (GPT). LLMs are a subset of the wider generative AI category, which brought a new dimension to content creation spanning text, images, video, and music. This development, heralded at CES 2024, signaled that generative AI would soon become an integral part of our daily lives.

As AI stepped into the limelight, its potential for both constructive and destructive uses became increasingly apparent. Generative AI applications began to proliferate, and with their growth came new challenges in preventing misuse. One significant issue was AI prompt hacking, where users with both good and bad intentions manipulated AI models into performing tasks outside their intended scope. This phenomenon highlighted the need for robust guardrails in AI systems to deter exploitation.

Simultaneously, the open-source community saw the rise of private GPT models on platforms like GitHub. These models often lacked the comprehensive safeguarding measures implemented by commercial providers, leading to the creation of underground AI services. These services offered GPT-like capabilities, optimized for more nefarious purposes, and without ethical constraints, presenting a new toolkit for threat actors.

The rapid evolution of generative AI, with advancements like Google's Gemini, a multi-modal generative AI system capable of interpreting and generating text, audio/voice, images, video, and code, poses new challenges. These tools have the potential to enable highly credible scams and deepfakes with minimal effort. Ethical providers are working to implement guardrails to limit abuse, but the risk of similar systems being exploited by malicious actors for large-scale spear-phishing and misinformation campaigns is a growing concern.

Despite their impressive capabilities, LLMs are not without limitations. At their core, they are colossal statistical language processors trained on vast amounts of internet data. Their proficiency lies in mimicking and reproducing information from their training datasets. While they significantly aid education and productivity, their capacity for critical thinking or generating entirely new ideas is limited. They operate by interpolating and finding the closest matches within their training data, which makes them incredibly useful tools but not truly intelligent entities.

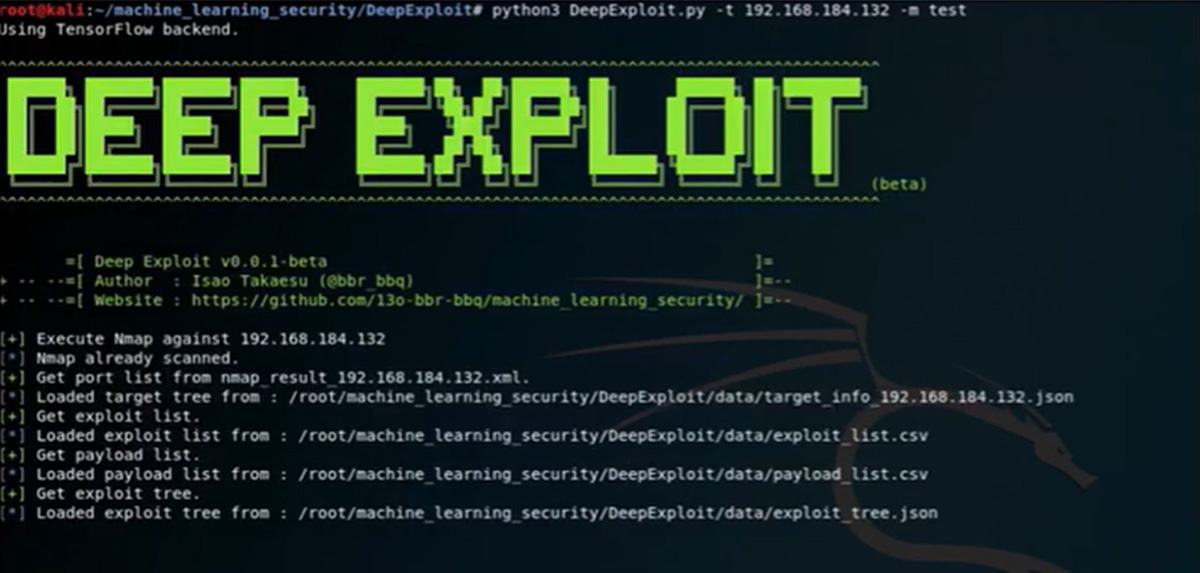

AI has been used to automate vulnerability and penetration testing of online applications for several years. Tools have been readily available and have worked with varying levels of success. They have proven more capable than the current generation of GPT at developing new malicious payloads or finding vulnerabilities in web applications and APIs. DeepExploit, for example, which was presented at Black Hat in 2018, leverages reinforcement learning, while DeepGenerator leverages genetic algorithms and a Generative Adversarial Network (GAN) to generate new payloads that can breach online applications. These tools have been effective in automated pen testing, provided they have unrestricted access to the application and the application generates error messages rich enough to give the model the information it needs to progress its search. The issue for malicious actors is that these tools are very noisy. They generate a lot of random activity before they become even remotely effective, and they will be discovered as soon as web application and API protections detect their stochastic behavior.
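To make the point about noise concrete, here is a minimal sketch of how a defender might flag a client whose traffic is dominated by error responses, the kind of footprint a trial-and-error search tends to leave. The function name `flag_noisy_clients`, the thresholds, and the `(client_id, status_code)` event shape are all assumptions chosen for illustration; this is not how any particular web application or API protection product works.

```python
from collections import defaultdict


def flag_noisy_clients(events, min_requests=20, max_error_ratio=0.5):
    """Return client IDs whose share of error responses exceeds a threshold.

    events: iterable of (client_id, status_code) tuples (hypothetical log shape).
    min_requests: ignore clients with too few requests to judge.
    max_error_ratio: flag clients whose 4xx/5xx share is above this value.
    """
    totals = defaultdict(int)
    errors = defaultdict(int)
    for client_id, status in events:
        totals[client_id] += 1
        if status >= 400:  # count client and server errors alike
            errors[client_id] += 1
    return sorted(
        client
        for client, count in totals.items()
        if count >= min_requests and errors[client] / count > max_error_ratio
    )


# Illustrative data: one client producing only errors, one behaving normally.
sample = [("10.0.0.5", 404)] * 40 + [("10.0.0.9", 200)] * 40 + [("10.0.0.9", 404)] * 2
print(flag_noisy_clients(sample))  # ['10.0.0.5']
```

A real deployment would look at many more signals than status codes, but even this crude ratio shows why a tool that learns by probing at random is easy to spot.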

While generative AI in its present state offers limited utility for generating sophisticated payloads and attacks, it notably enhances the productivity of threat actors. Other AI technologies might get a renewed boost from all the attention on recent advancements in the field, and the resulting advancements could have significant implications for cybersecurity as we know it. The cybersecurity community faces the challenge of evolving detection methods and controls to stay ahead of emerging threats.

We are in an AI arms race. Think of it as the modern version of the nuclear arms race of the Cold War era. Military research and development has access to deep budgets, along with the means and knowledge to advance new technology at a pace that could outstrip the cybersecurity community. Even if governments keep their promise to be ethical in developing new applications and technologies, there is always a rogue player who goes one step too far, forcing the other players to keep up. The role of a global AI watchdog promoting ethical use of technology cannot be overstated here.

Tech companies like OpenAI and Microsoft hold a pivotal role in this landscape. They are at the forefront of balancing innovation with responsibility. The approach to preventing technology abuse is not through secretive innovation but through a commitment to keeping pace with technological advancements. The cybersecurity community must continually adapt to new tactics and techniques employed by cybercriminals, maintaining a technology race that has always been a part of the security paradigm.

Restricting access to AI systems to mitigate risks could hamper progress and innovation. The strength of a nation's cybersecurity posture is increasingly defined by its organizations' ability to defend against cyberattacks. In this context, open innovation becomes essential, necessitating a balanced approach to managing risks while fostering technological advancement.

Concluding thoughts

LLMs and GANs (Generative Adversarial Networks) are transforming the technological landscape. They not only enhance productivity and automate tasks but also create novel content, including new attack payloads. The AI journey, marked by both progress and setbacks, continues to evolve. The AI landscape, much like the security scene, is a continual technological race between innovation and safeguarding against emerging threats. This dynamic, while evolving with each technological advancement, remains fundamentally similar to the challenges faced in the past.

From an organization's perspective, certain security basics go a long way toward protecting against advanced and automated threats. Organizations can control their threat surface by continuously identifying, assessing, and mitigating vulnerabilities across their digital and physical assets, networks, and human elements. Automation makes this process continuous, and the process itself can evolve as AI matures in this space. And while it might not be possible to catch every attack or zero-day exploit in the first layer of defense, adequate visibility and behavioral detection across the infrastructure should find and alert on suspicious or anomalous activity early enough to allow timely mitigation.
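As a rough illustration of what behavioral detection means in practice, the sketch below compares a new observation against a baseline of recent activity and flags it when it deviates by more than a few standard deviations. The function name `is_anomalous`, the threshold of 3.0, and the idea of using hourly request counts as the signal are assumptions made for this example; production systems typically combine many signals and more robust statistics.

```python
from statistics import mean, stdev


def is_anomalous(baseline, observed, threshold=3.0):
    """Flag an observation that deviates strongly from a baseline.

    baseline: past measurements, e.g. hourly request counts (hypothetical signal).
    observed: the new measurement to evaluate.
    threshold: number of standard deviations treated as anomalous.
    """
    if len(baseline) < 2:
        raise ValueError("baseline needs at least two measurements")
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any change at all is a deviation.
        return observed != mu
    return abs(observed - mu) / sigma > threshold


# Illustrative baseline of hourly request counts for one service.
hourly_requests = [100, 104, 98, 101, 97, 103, 99, 102]
print(is_anomalous(hourly_requests, 101))  # False
print(is_anomalous(hourly_requests, 450))  # True
```

The design choice worth noting is that the check needs no knowledge of the attack itself. It only needs a picture of what normal looks like, which is why visibility across the infrastructure matters as much as the detection logic.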

The AI revolution of 2023 has set the stage for a future where generative AI's potential is boundless, yet its challenges are equally formidable. As AI continues to integrate into every aspect of our lives, the balance between leveraging its capabilities and protecting against its misuse becomes a paramount concern. The journey ahead is one of cautious optimism, where innovation is embraced, but vigilance remains a constant companion.